OpenAI Deploys 'Trusted Contact' Notification System for ChatGPT Users in Crisis

Breaking: ChatGPT Now Has a Lifeline to Real-World Support

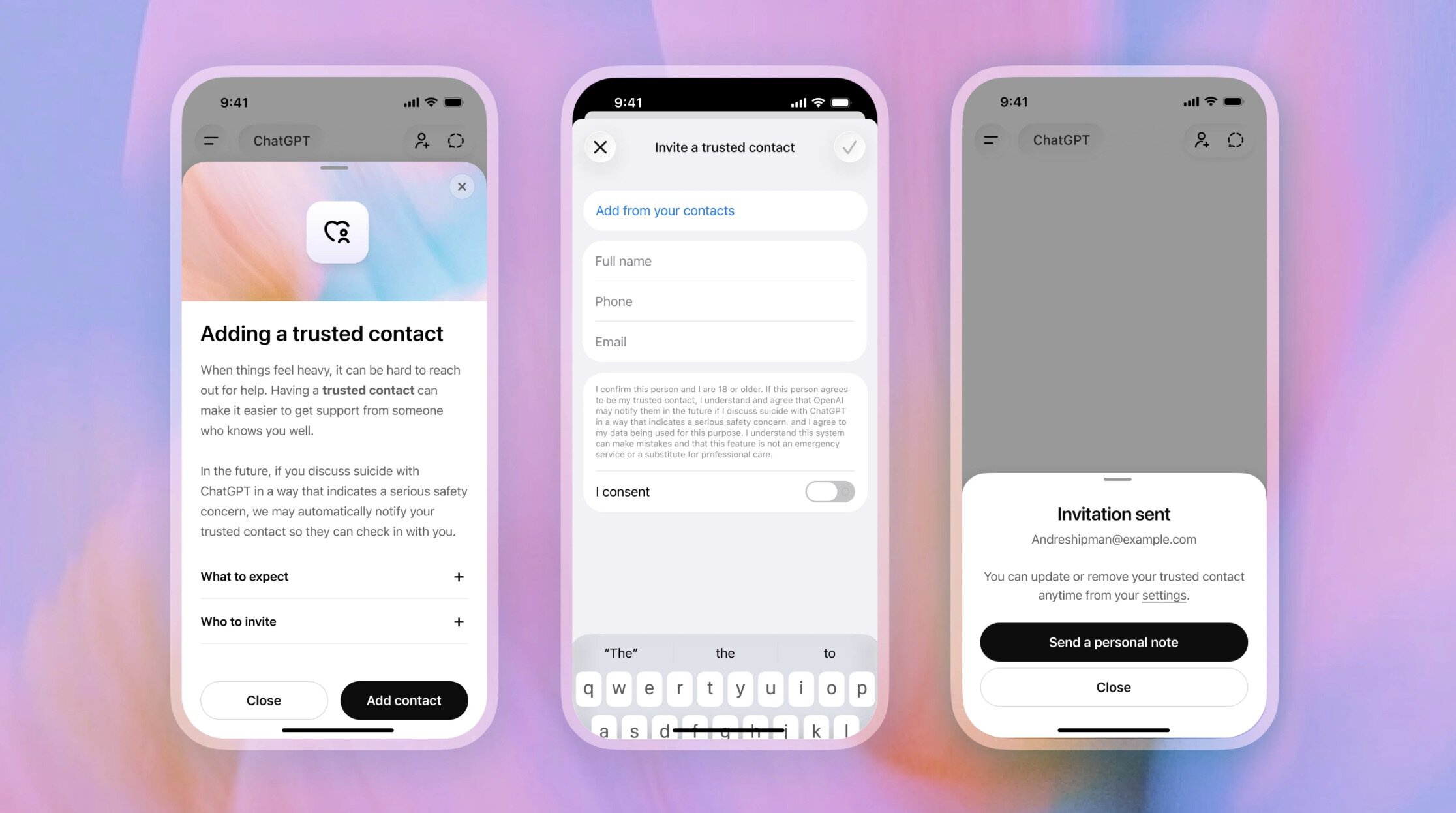

OpenAI has quietly activated a new safety feature for its popular chatbot, ChatGPT, that can alert a user’s designated Trusted Contact during moments of crisis. The company confirmed the rollout today, signaling a major shift in how AI platforms handle sensitive conversations.

The feature, available only to adult users, allows individuals to register a trusted friend, family member, or support person. If ChatGPT’s systems detect language indicating self-harm or an emergency, the platform will notify that contact via email or text message.

“Our goal is to bridge the gap between digital conversation and real-world intervention,” said Dr. Emma Torres, an OpenAI safety researcher, in an exclusive statement. “We are training human reviewers to flag high-risk dialogues and then trigger the notification chain built around the user’s chosen contact.”

Background: A History of Harm and Lawsuits

ChatGPT has long been a go-to tool for discussions about mental health, but that reliance has a dark side. Over the past year, multiple self-harm cases have been linked to prolonged chatbot interactions, and OpenAI has faced at least two lawsuits alleging that the AI failed to intervene in time.

In one widely reported incident, a teenager in Florida repeatedly mentioned suicidal ideation during chats. The family later sued, arguing that the company knew of the risk. Another case, in the UK, involved an adult whose distress escalated over weeks without any external alert.

Now, the Trusted Contact feature is OpenAI’s first formal attempt to integrate human oversight directly into the response process. The company has also expanded its panel of safety reviewers to evaluate conversations lasting more than five minutes that contain keywords flagged by the system.
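For technically minded readers, the review criterion described above (a conversation longer than five minutes that also contains a flagged keyword) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Conversation` type, the `needs_human_review` function, and the placeholder keywords are hypothetical, and OpenAI has not published how its system is implemented.

```python
from dataclasses import dataclass
from datetime import timedelta

# Hypothetical placeholder terms; the real keyword list is not public.
FLAGGED_KEYWORDS = {"example-term-a", "example-term-b"}

# Threshold taken from the article: conversations lasting more than five minutes.
MIN_DURATION = timedelta(minutes=5)


@dataclass
class Conversation:
    """Hypothetical stand-in for a chat session."""
    duration: timedelta
    messages: list[str]


def needs_human_review(conv: Conversation) -> bool:
    """Return True when a conversation meets both review criteria."""
    if conv.duration <= MIN_DURATION:
        return False
    text = " ".join(conv.messages).lower()
    # Simple substring match; a real system would likely be far more nuanced.
    return any(keyword in text for keyword in FLAGGED_KEYWORDS)
```

In this sketch both conditions must hold, so a short conversation is never queued even if it contains a flagged term. Whether the real system behaves that way is not stated.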

What This Means for Users and the AI Industry

This update effectively turns ChatGPT into a passive emergency observer. Once a user opts in, the platform will monitor for patterns of distress previously associated with self-harm. However, OpenAI stresses that no conversation data is shared with the Trusted Contact—only a high-level alert that the user might need support.

“This is a double-edged sword,” warned Professor Linda Chu, a digital ethics expert at Stanford. “It could prevent tragedies, but it also raises questions about autonomy and privacy. A user may not want a family member to know they are struggling.”

OpenAI says the feature is voluntary; users can add, change, or remove their Trusted Contact at any time via the account settings menu. The company is also testing an option to allow users to pause detection for up to 24 hours. Early beta testers report that alerts are sent within two minutes of a severe trigger being identified.
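The opt-in and pause behavior described above can be modeled roughly as follows. This is a minimal sketch, not OpenAI's code: the `TrustedContactSettings` class, its field names, and the choice to reject pauses longer than 24 hours are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Limit taken from the article: detection can be paused for up to 24 hours.
MAX_PAUSE = timedelta(hours=24)


@dataclass
class TrustedContactSettings:
    """Hypothetical per-user settings for the feature."""
    contact: Optional[str] = None          # None means the user has not opted in
    paused_until: Optional[datetime] = None

    def pause(self, now: datetime, duration: timedelta) -> None:
        """Pause detection; longer-than-allowed pauses are rejected (an assumption)."""
        if duration > MAX_PAUSE:
            raise ValueError("pause cannot exceed 24 hours")
        self.paused_until = now + duration

    def detection_active(self, now: datetime) -> bool:
        """Detection runs only for opted-in users outside a pause window."""
        if self.contact is None:
            return False
        return self.paused_until is None or now >= self.paused_until
```

Removing the contact (setting `contact` back to `None`) switches detection off entirely in this model, which mirrors the article's description of the feature as voluntary.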

Rollout and Availability

The Trusted Contact system is currently live for ChatGPT Plus subscribers in the United States, Canada, and the United Kingdom. A broader global rollout is expected by the end of the quarter, pending regulatory reviews in the EU. OpenAI has not announced which languages the feature will initially support, but English, Spanish, and French are likely first.

For more details on how to set up the feature, visit the official Safety Settings page in your ChatGPT account.

Additional context: The new tool is completely separate from ChatGPT’s existing content moderation system, which already rejects certain harmful outputs. It is designed solely as a proactive alert mechanism—not a replacement for therapy or hotlines.

- Key point: The feature works only for adult users (18+).

- Key point: Human reviewers are employed to catch nuance that automated filters might miss.

- Key point: Trusted Contact receives no conversation details—only a notification to check on the user.

As AI companions become increasingly intimate parts of daily life, OpenAI’s move could set a precedent for how tech companies handle digital duty of care. Whether it will be enough to silence critics—and plaintiffs—remains to be seen.