OpenAI's GPT-5.5 Powers Codex at NVIDIA, Bringing 'Mind-Blowing' Efficiency Gains

Breaking: More than 10,000 NVIDIA employees across engineering, product, legal, marketing, and other departments are now using OpenAI's latest frontier model, GPT-5.5, through the Codex coding agent — and early results show a dramatic transformation in developer workflows.

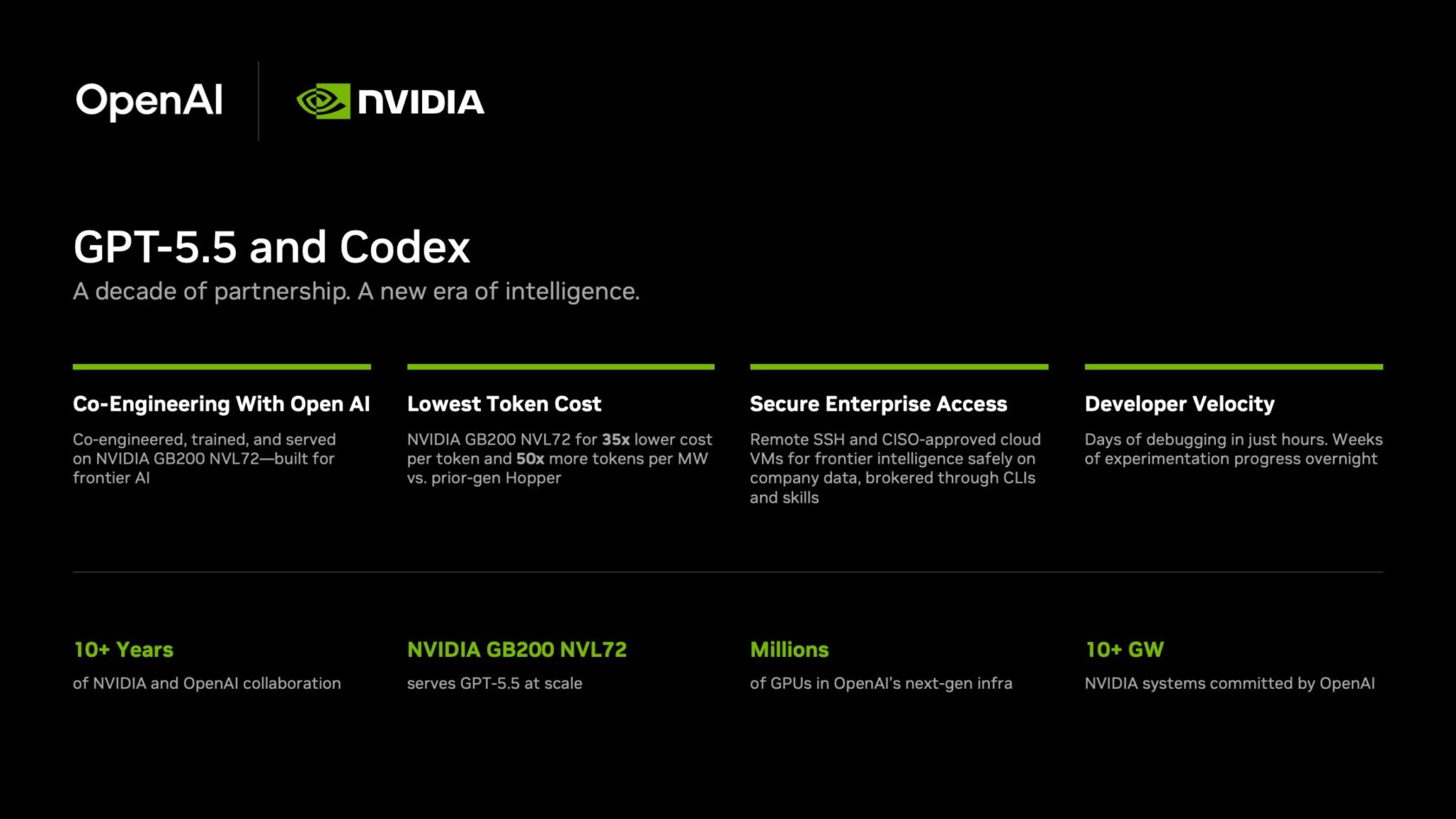

GPT-5.5 runs on NVIDIA's GB200 NVL72 rack-scale systems, which deliver a 35x reduction in cost per million tokens and 50x higher token output per second per megawatt compared with prior-generation infrastructure. These economics make frontier-model inference viable at massive enterprise scale.
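To make those two ratios concrete, here is a minimal arithmetic sketch. Only the 35x and 50x factors come from the figures above; the baseline cost, baseline throughput, and monthly token volume are hypothetical numbers chosen purely for illustration, not reported values.

```python
# Factors quoted in the article.
COST_REDUCTION_FACTOR = 35.0   # 35x lower cost per million tokens
THROUGHPUT_GAIN_FACTOR = 50.0  # 50x more tokens per second per megawatt


def scaled_cost(baseline_cost_per_million: float) -> float:
    """Cost per million tokens after the quoted 35x reduction."""
    return baseline_cost_per_million / COST_REDUCTION_FACTOR


def scaled_throughput(baseline_tokens_per_sec_per_mw: float) -> float:
    """Tokens per second per megawatt after the quoted 50x gain."""
    return baseline_tokens_per_sec_per_mw * THROUGHPUT_GAIN_FACTOR


# Hypothetical baselines (assumptions, not figures from the article).
baseline_cost = 7.00              # dollars per million tokens
baseline_throughput = 1_000.0     # tokens per second per megawatt
monthly_tokens_millions = 10_000  # 10 billion tokens per month

new_cost = scaled_cost(baseline_cost)
new_throughput = scaled_throughput(baseline_throughput)

baseline_monthly_bill = baseline_cost * monthly_tokens_millions
new_monthly_bill = new_cost * monthly_tokens_millions

print(f"Cost per million tokens: ${baseline_cost:.2f} -> ${new_cost:.2f}")
print(f"Tokens/s per MW: {baseline_throughput:,.0f} -> {new_throughput:,.0f}")
print(f"Monthly bill: ${baseline_monthly_bill:,.0f} -> ${new_monthly_bill:,.0f}")
```

Under these assumed baselines, the same monthly token volume costs one thirty-fifth as much, and a fixed power budget serves fifty times the token rate.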

"Mind-blowing" and "life-changing" are how NVIDIA employees describe the shift. Debugging cycles that once stretched across days now close in hours. Complex, multi-file codebase experimentation that previously required weeks can be completed overnight. Teams are shipping end-to-end features directly from natural-language prompts with unprecedented reliability.

NVIDIA founder and CEO Jensen Huang urged all employees to adopt Codex in a company-wide email: "Let's jump to lightspeed. Welcome to the age of AI."

Background

The GPT-5.5 launch and Codex rollout are the latest milestones in a partnership spanning over a decade between NVIDIA and OpenAI. The collaboration began in 2016 when Huang personally handed a DGX-1 supercomputer to the OpenAI team — a moment that marked the start of a deep, full-stack relationship.

Since then, NVIDIA has worked with every frontier model company to accelerate AI agents, reduce inference costs, and improve power efficiency. The GB200 NVL72 system represents the current pinnacle of that engineering effort, purpose-built to serve models like GPT-5.5 at enterprise scale.

Enterprise Security Built In

Each agent requires its own dedicated secure environment. NVIDIA's IT team rolled out cloud virtual machines (VMs) for every employee, providing a sandboxed computing environment with full auditability.

The Codex app supports remote Secure Shell (SSH) connections to approved cloud VMs, allowing agents to work with real company data without exposing it externally. A zero-data retention policy governs the deployment, and agents access production systems with read-only permissions through command-line interfaces and Skills — the same agentic toolkit NVIDIA uses for internal automation workflows.
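The article does not describe how these controls are enforced, so the following is only an illustrative sketch of the general idea: an agent's request is allowed only if it targets an approved VM and uses a read-only command. The host names, the command allowlist, and the `is_allowed` helper are all hypothetical and do not reflect NVIDIA's or OpenAI's actual implementation.

```python
import shlex

# Hypothetical allowlists (assumptions for illustration only).
APPROVED_HOSTS = {
    "agent-vm-001.internal.example",
    "agent-vm-002.internal.example",
}
READ_ONLY_COMMANDS = {"ls", "cat", "grep", "head", "tail"}

# Shell metacharacters that could chain commands or redirect output.
FORBIDDEN_CHARS = set(";|&><`$")


def is_allowed(host: str, command: str) -> bool:
    """Return True only for a read-only command on an approved VM."""
    if host not in APPROVED_HOSTS:
        return False
    if any(ch in FORBIDDEN_CHARS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes or similar malformed input.
        return False
    if not tokens:
        return False
    return tokens[0] in READ_ONLY_COMMANDS


print(is_allowed("agent-vm-001.internal.example", "cat /var/log/app.log"))
print(is_allowed("agent-vm-001.internal.example", "rm -rf /tmp/scratch"))
print(is_allowed("laptop.example", "ls /srv"))
```

A real deployment would enforce read-only access on the server side (for example through account permissions) rather than relying on client-side filtering alone; the check here only illustrates the policy.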

What This Means

AI agents have moved beyond simple coding assistance into true knowledge work: processing information, solving complex problems, generating new ideas, and driving innovation. GPT-5.5-powered Codex demonstrates that frontier models can now be deployed safely and cost-effectively inside the world's most demanding enterprises.

The combination of dramatically lower inference costs, higher throughput, and robust security, including SSH access to sandboxed cloud VMs and a zero-data retention policy, sets a new standard for how companies can harness AI agents without compromising on data governance.

As Jensen Huang's call to "jump to lightspeed" echoes through NVIDIA, other enterprises are likely to follow. The age of AI-powered knowledge work has arrived — and it's running on NVIDIA's own hardware.